Part 2: How machine learning, AI and automation could break the BI adoption barrier

If, as we saw in part one of this series, 77% of businesses are 'definitely not' or 'probably not' using analytics to its full extent, and the adoption rate of analytics platforms is an abysmal 32%, something drastic needs to happen. Can augmented analytics, with its machine learning and AI, fix this adoption problem?

To find out, we need to understand how business analytics got to where it is today. Only by knowing the strengths and pitfalls of each era of analytics can we judge whether augmented analytics is really the answer.

How we got to the era of augmented analytics

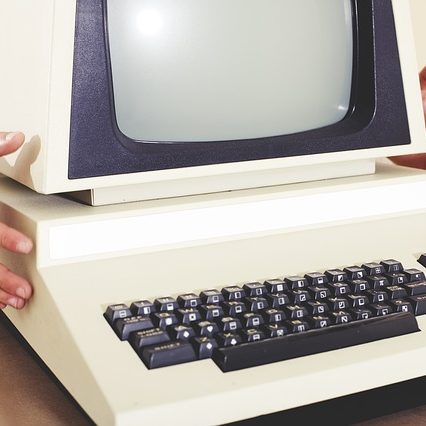

1985 - 2005: The tool of IT experts

BI started out as an elite, expensive IT tool that was incredibly complex to use. Because the tools were complex and inflexible, insights took weeks to unearth and could only be provided by IT experts. As a result, data and insights were largely inaccessible, and very few people had data available to them for business decision-making. Only large enterprises could afford to employ data analysts. Still, this era gave birth to business reporting and dashboards as we know them.

2005 - 2015: Self-service analytics

By the mid-2000s, 'self-service' was the buzzword. Self-service analytics vendors gave rise to power users and data analysts who could work independently of IT. BI vendors enabled analysts to prepare, discover, and visualize data through simpler processes and easier-to-use interfaces.

The weakness of that period, however, was the decentralization of analytics, which meant a lack of governance. With little governance, there was no oversight of where analyses came from or how insights were derived, and that bred mistrust when different people produced different results from the same data. Without these checks and balances, users were slow to adopt BI, partly because they did not trust it. So although BI was more accessible than ever, adoption hit a plateau.

Most businesses still operate in this era.

2016 - now: Augmented analytics

Recently, a new wave of analytics has emerged that promises automation and instant insights for everyone. The trend has snowballed to include natural language search queries, machine-learning-generated analyses, and automated data discovery such as Yellowfin Assisted Insights. Within the next twelve months, we expect nearly every analytics vendor to build automation, using machine learning and natural language interfaces, into their platforms.

Although this will be an enormous leap forward in delivering insights at the speed businesses have always wanted, it brings its own problem: transparency. Which algorithms and underlying calculations are being used? Can I trust the engine the insights are built on?

If the automation behind insights and data discovery is a black box, trust in the numbers will remain very low, just as it did in the ungoverned self-service era.

This may be more of an issue for analysts than for business users, who have become unquestioningly reliant on algorithms in many areas of life, from Google search results to their Facebook feed. For business users, the benefit of automated insights delivered without even having to open an analytics tool could far outweigh any concerns they have about algorithms.

What is driving this wave of augmented analytics

Three market trends are driving this next wave of analytics: the increasing volume, greater variety, and higher velocity of data, known as the three Vs. These trends are especially evident with the rise of the Internet of Things (IoT), whose devices collect and produce enormous volumes of metrics.

Hardly a day goes by without a new web data source, API, or slice of the 360° customer view adding to that variety. And the speed at which data moves is phenomenal. To see it in action, you only need to pause your online shopping and switch to social media, where the very item you just considered buying appears as a retargeting advert.

The three Vs of data let us ask more questions, and more specific ones, to find the answers we need to optimize the business. With this proliferation of data, we can shift from asking "What happened?" to asking "Why did it happen?" But the 'why' is still a needle in a haystack, and the haystack keeps growing.

The volume, variety, and velocity of data can therefore both enable and hinder the search for its value, because analysis is still driven by manual data discovery, which requires a data analyst's skill set.

The four blockers to BI adoption

In the next post, we'll look at the four underlying blockers to BI and analytics adoption, so we can uncover how automation in analytics can tackle them.
