Self-Service BI: How to Make It Work for Your Enterprise

Self-service analytics, also known as self-service business intelligence (BI), means different things to different people. For some, it might just mean an interactive report which lets them drill up and down a hierarchy or maybe switch to a different metric.

For others, it might mean complete freedom to access anything in the company’s data warehouse in order to generate strategic insight. The common theme between these views is the desire to get the job done, without having to wait for anyone to help out.

Why is that so important? To put it simply: time is money, and the time spent waiting for someone to provide a report or data extract is often dead time and wasted money.

It’s worse than that, though, because the person who ultimately provides the data or report is very often overqualified and overpaid for the task. They would rather be answering complex questions and adding more value.

A “self-service” initiative should therefore be trying to do two things: first, to empower people to get the answers they need without having to wait around; and second, to make sure your data analysts and data management team are focused on high-value tasks such as adding new data sources, solving complex problems and creating reusable assets (high-quality data models, semantic views, data glossaries, etc).

With me so far? Great, so why isn’t everyone doing this already? Let’s see.

Why is self-service BI hard, and what can we do about it?

I’ve worked in and around BI and data management for three decades, and it’s been clear to me for some time that the biggest problem (or, as I prefer to see it, opportunity) in BI is not data, but process. That’s why, although I love data, data modelling and creating data insights, I’m still a Solution Architect rather than a Data Analyst or a Data Scientist.

As a Solution Architect, my job is to see the big picture and propose solutions which balance the various concerns of the organization. I’m talking about business concerns, not data management or business intelligence concerns. We must always remember why we’re doing data management and business intelligence.

Business intelligence is the process of collecting, analyzing and presenting data to support business decision-making and improve organizational performance.

Fair warning: you may not agree with what I’m about to say, but I encourage you to hear me out.

I would argue that all business decisions are made in the context of a business process.

I would further argue that the reason business intelligence is so hard to get right is that we don’t put enough effort into modelling our business processes.

Effective business intelligence, in my view, boils down to this:

- Identify/select a business process

- Create a SIPOC model for the process (Suppliers, Inputs, Process(es), Outputs, Consumers)

- Identify the decision points in the process(es)

- Provide appropriate Decision Support (the old-school name for business intelligence!)

- Repeat
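Writing a SIPOC model down as structured data, rather than only as a diagram, makes its decision points easy to list and track through each iteration of the steps above. The sketch below is a hypothetical, minimal representation in Python; the class names, fields and the simplified order-fulfilment example are illustrative assumptions, not a standard SIPOC schema or a Yellowfin feature.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionPoint:
    """A decision taken within a process step (hypothetical structure)."""
    step: str                   # process step where the decision is made
    question: str               # the decision that needs support
    decision_support: str = ""  # report, nudge or recommendation; empty if none yet


@dataclass
class SipocModel:
    """Suppliers, Inputs, Process(es), Outputs, Consumers for one business process."""
    name: str
    suppliers: list[str]
    inputs: list[str]
    processes: list[str]
    outputs: list[str]
    consumers: list[str]
    decision_points: list[DecisionPoint] = field(default_factory=list)

    def unsupported_decisions(self) -> list[DecisionPoint]:
        """Decision points that do not yet have any decision support."""
        return [d for d in self.decision_points if not d.decision_support]


# Illustrative example only: a simplified order-fulfilment process.
fulfilment = SipocModel(
    name="Order fulfilment",
    suppliers=["Customers", "Warehouse"],
    inputs=["Customer order", "Stock levels"],
    processes=["Validate order", "Allocate stock", "Dispatch"],
    outputs=["Dispatched parcel", "Invoice"],
    consumers=["Customer", "Finance"],
    decision_points=[
        DecisionPoint("Allocate stock", "Which warehouse should fulfil the order?"),
        DecisionPoint("Dispatch", "Which carrier should we use?",
                      decision_support="Carrier cost and delivery-time report"),
    ],
)

# List the decisions still waiting for decision support.
for d in fulfilment.unsupported_decisions():
    print(f"{d.step}: {d.question}")
```

Each pass through the "Repeat" step can then pick off the next unsupported decision point, so the backlog of BI work is driven by the process rather than by ad hoc report requests.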

That’s probably a bit of an over-simplification, but the point I want to drive home is that you can only provide effective business intelligence solutions if you fully understand the business context.

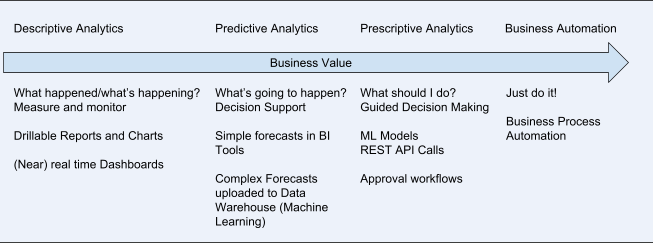

Now, to my way of thinking, modern BI is a spectrum like this:

If you do BI this way, then you won’t have to worry too much about how to apply role-based access control to your BI solution, because most people will only consume BI in the context of the business processes which are part of their job.
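As a rough illustration of why process-centric BI simplifies access control, the sketch below derives what each user can see from the business processes their roles take part in, rather than granting access report by report. The role names, processes and report titles are hypothetical, and this is not how any particular BI tool models permissions.

```python
# Hypothetical mapping of job roles to the business processes they take part in.
ROLE_PROCESSES: dict[str, set[str]] = {
    "buyer": {"range planning", "replenishment"},
    "campaign_manager": {"campaign planning"},
}

# Hypothetical BI content, each item tagged with the process it supports.
CONTENT: dict[str, str] = {
    "Stock cover by store": "replenishment",
    "Supplier lead times": "replenishment",
    "Campaign response rates": "campaign planning",
}


def visible_content(roles: set[str]) -> list[str]:
    """Return the BI content a user sees, based on the processes their roles cover."""
    # Union of all processes across the user's roles (unknown roles grant nothing).
    processes = set().union(*(ROLE_PROCESSES.get(r, set()) for r in roles))
    return sorted(name for name, process in CONTENT.items() if process in processes)


print(visible_content({"buyer"}))
# ['Stock cover by store', 'Supplier lead times']
```

The design choice here is that permissions follow from the process model: when someone changes job, changing their role is enough, and no individual report needs its own access rule.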

However, you may be thinking, “That’s great, but what about my Insight Team? They say they need access to all our data so they can come up with the best insight.”

My answer to that is related to my assertion about business decisions, but may be even more controversial!

Giving your Insight Teams unfettered access to your data and leaving them to come up with insight is like an episode of Scrapheap Challenge in which you neglect to tell the teams what you actually want them to build. Sure, they might come up with some cool stuff, but how likely is it to take your business forward?

To get value from your Insight Team, you need to establish the following:

- Be clear about what information you want

- Discuss what resources (data) are available

- Set boundaries which reflect company policy, GDPR and any applicable industry regulations

There’s no point giving users data which you’re not allowed to use, and you can probably save a whole lot of time by steering them towards the data you think is most relevant.

How will you know what’s relevant? Hint: SIPOC! The brief should probably be along the lines of: “Process X has a decision point in it and we need a recommendation engine to help make the decisions. This is the information we have available. Here’s some historical information about inputs, decisions and outcomes. See what you can do.”

To minimize the risk of data breaches, you’ll probably want to anonymize any personal data, but in a way which doesn’t lose the information you need. For example, you could use a one-way hash (ideally a keyed one, so that nobody can reverse it simply by hashing every possible source key): this maps source keys to target keys in such a way that a specific source key is always mapped to the same target key, but there’s no practical way to get the source key back from a target key. Alternatively, you could use tokenization, where source keys are replaced with tokens which can be mapped back to the original keys, but access to the mapping table is tightly controlled (and certainly not given to the Insight Team!).
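The following sketch illustrates both approaches using only the Python standard library. It is a minimal illustration under stated assumptions, not a production design: the class names and the example customer key are hypothetical, HMAC-SHA256 is an assumed choice of keyed hash, and in a real data platform the secret and the token mapping table would live in secured storage rather than in memory.

```python
import hashlib
import hmac
import secrets


class KeyedHasher:
    """One-way mapping: the same source key always yields the same target key,
    but a target key cannot practically be turned back into its source key.

    Uses HMAC-SHA256 with a secret held only by the data layer. A plain,
    unkeyed hash would be weaker: anyone could hash every plausible source
    key (e.g. all customer numbers) and compare the results.
    """

    def __init__(self, secret: bytes) -> None:
        self._secret = secret

    def pseudonymize(self, source_key: str) -> str:
        digest = hmac.new(self._secret, source_key.encode("utf-8"), hashlib.sha256)
        return digest.hexdigest()


class TokenVault:
    """Reversible tokenization: source keys are swapped for random tokens, and
    the mapping is kept so that authorized processes can reverse it.

    The in-memory dicts stand in for a tightly controlled mapping table.
    """

    def __init__(self) -> None:
        self._token_by_key: dict[str, str] = {}
        self._key_by_token: dict[str, str] = {}

    def tokenize(self, source_key: str) -> str:
        # Reuse an existing token so the same key always maps to the same token.
        if source_key in self._token_by_key:
            return self._token_by_key[source_key]
        token = secrets.token_hex(16)
        while token in self._key_by_token:  # guard against a (very unlikely) clash
            token = secrets.token_hex(16)
        self._token_by_key[source_key] = token
        self._key_by_token[token] = source_key
        return token

    def detokenize(self, token: str) -> str:
        # Access to this operation is exactly what must be kept from the Insight Team.
        return self._key_by_token[token]


hasher = KeyedHasher(secrets.token_bytes(32))
vault = TokenVault()

h1 = hasher.pseudonymize("CUST-000123")
h2 = hasher.pseudonymize("CUST-000123")
print(h1 == h2)  # True: stable mapping, so joins across tables still work

t = vault.tokenize("CUST-000123")
print(vault.detokenize(t))  # CUST-000123
```

Both mappings are deterministic per key, which is what preserves the information the Insight Team needs (counts, joins, histories per customer) while hiding who the customer actually is.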

It’s worth noting that both of these approaches are implemented in the data layer, not in the BI tools. That is deliberate: it means that even your BI tool administrators don’t have access to your sensitive data (which, by the way, isn’t limited to personal data).

Still with me? Great, then let’s take a closer look at some of the features of modern BI tools which facilitate self-service BI and how to use them effectively.

It’s all about the “Information Consumers”

Let’s take another look at the “BI Spectrum”. You may have noticed that the further right you go on the spectrum, the less “traditional BI” there is.

There’s a reason for that. People who really understand data, and statistics beyond the very basics, are hard to find, but many businesses have a creative dimension which still requires a lot of people, and those people have to make decisions.

What we need to do is to employ a small number of people who really do “get” data, statistics and, nowadays, machine learning, and put them to work creating reusable assets which help all those “creatives” to make better decisions (from a business perspective).

In that way, the value of the work done by your data and insight professionals is multiplied many times over.

In my view, that has implications for your BI tools. You don’t really want your “information consumers” to know that they’re using a “BI tool”. You want your BI tools to work behind the scenes to deliver content right into your line of business applications, providing insights, nudges and recommendations in the context of your business processes.

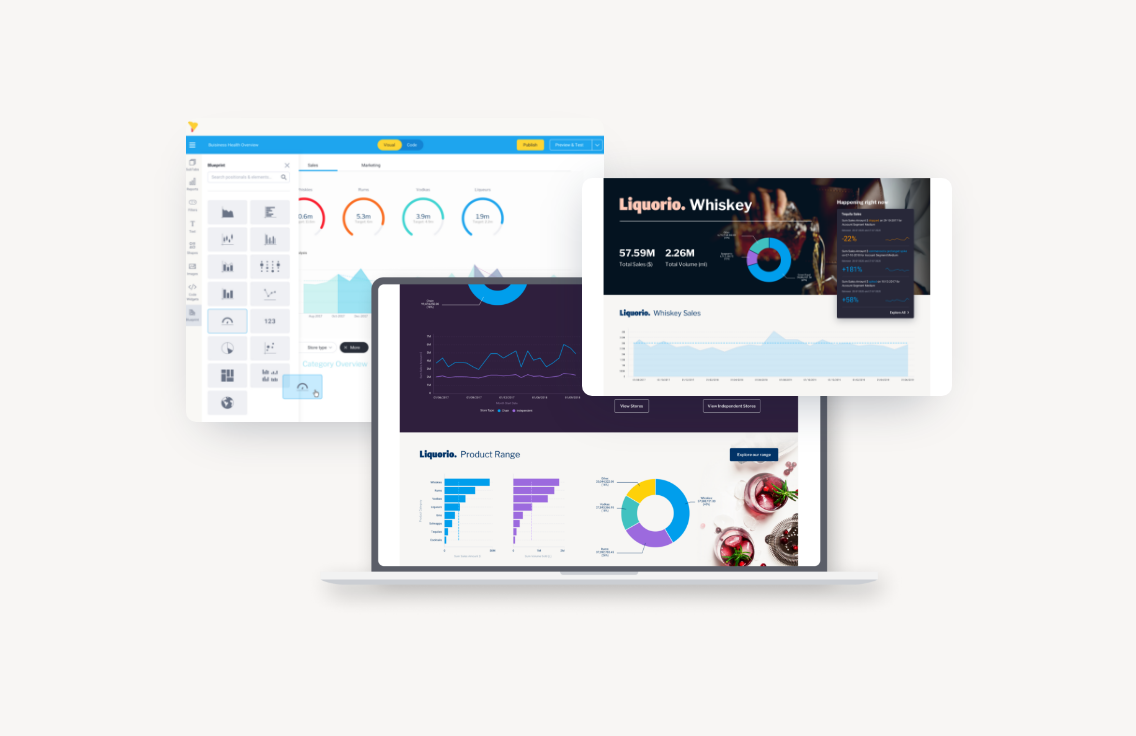

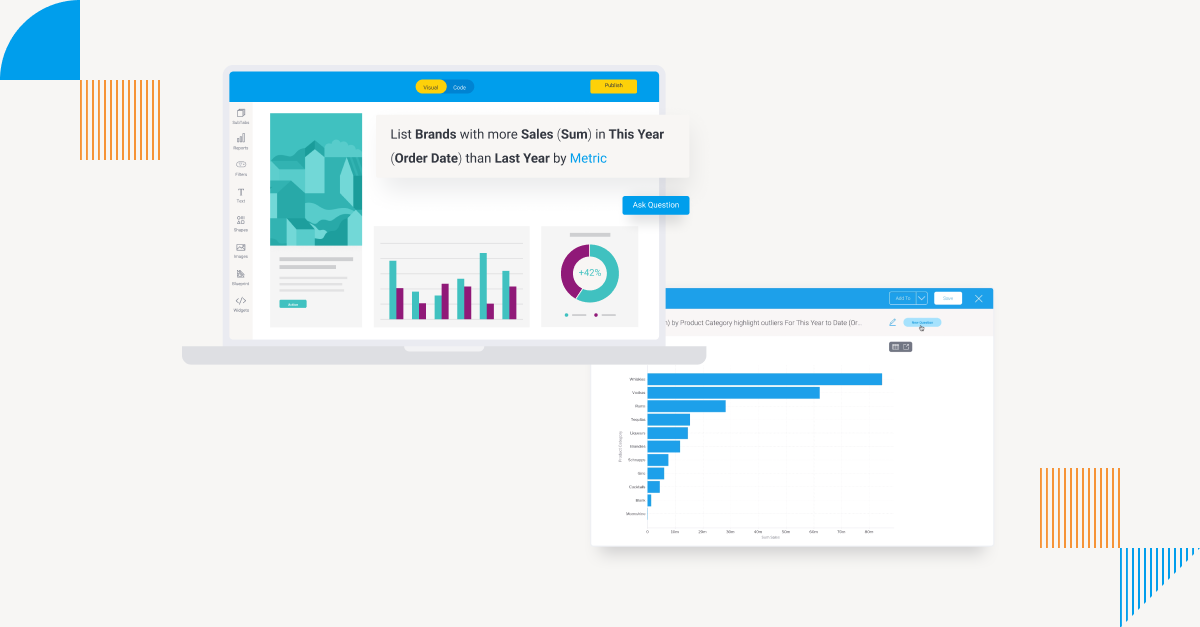

In other words, you want your BI tools to be fully embedded in your line of business applications. Yellowfin is particularly strong in the area of embedded analytics, with features such as multi-tenancy, action buttons and code mode designed to give you complete flexibility.

When I said that all decisions are made in the context of a business process, I meant it, but of course I recognize that not all “business processes” are rigidly defined. It’s quite common to classify business activities as either Planning or Execution. Processes on the Planning side are often rather loosely defined (perhaps too loosely, but that’s not the focus of this discussion). Consider the process for planning a new marketing campaign. At a high level, planning a campaign can be thought of as a set of interrelated decisions:

- What do we want to promote? (Products or services)

- Who do we want to promote it to? (Customer segment(s) or prospects)

- What “mechanic” shall we use? (Discounts, gifts, prize draw, etc)

- Which channels should we use? (Email, social media, online advertising, etc)

The process for answering these questions may be quite flexible, but it’s likely to involve data and it may well be collaborative.

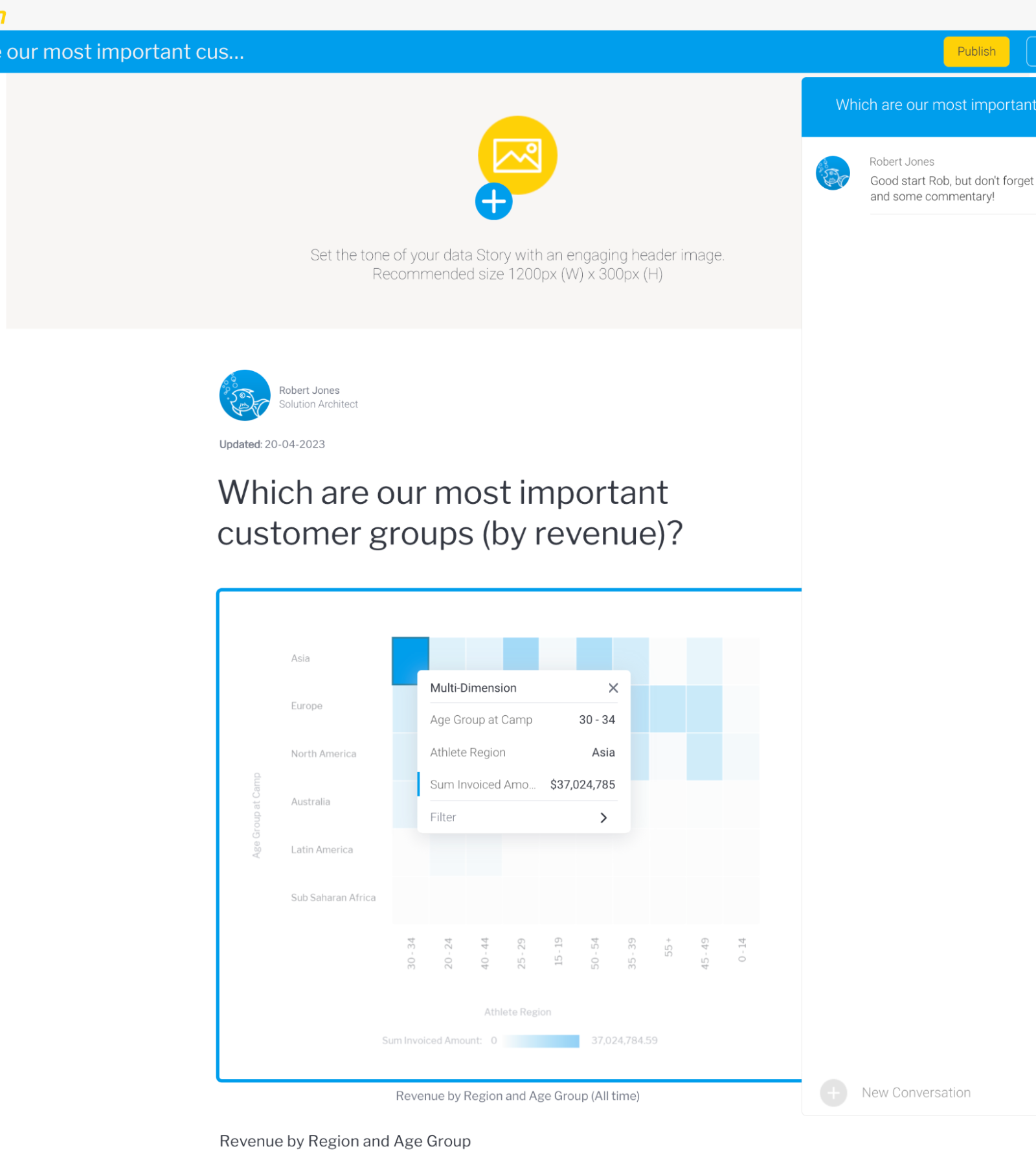

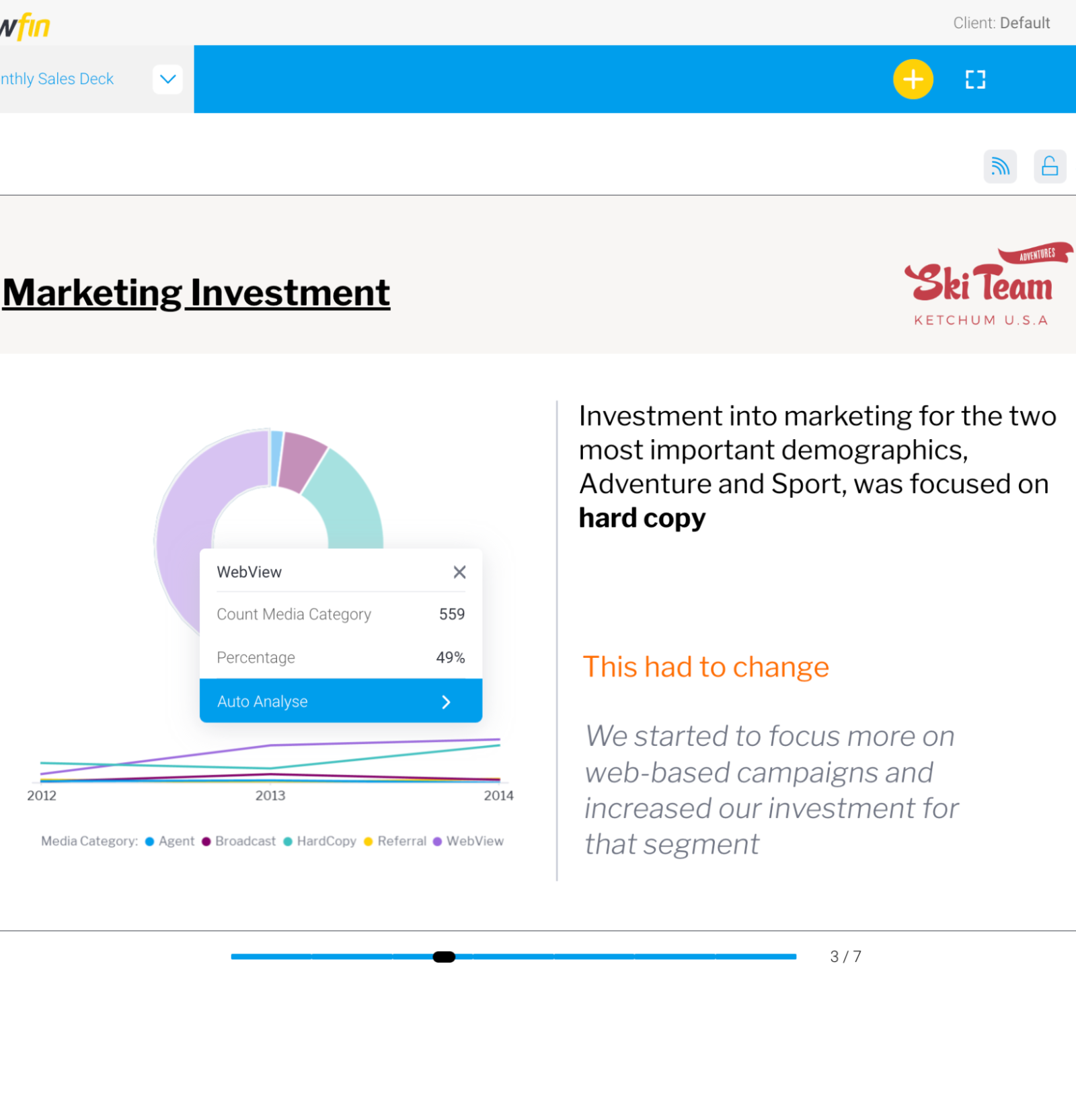

This is another Yellowfin “sweet spot”, thanks to our Stories and Presentations data storytelling features. Stories can be thought of as data-centric blogs, while Presentations are more like PowerPoint decks, but both can include interactive reports and charts. Stories also have a comments feature like a blog, which could be used to discuss an idea for a campaign, for example.

Or, if your process is a bit more rigid, you could create a Presentation template which includes all the charts required to pitch a campaign, with parameters for products/services, channel, mechanic, etc.

But can our creatives “self-serve” the charts and reports for their Stories and Presentations?

The answer is yes, as long as you’ve put in the work to figure out what kind of data they’re going to want and made it easy for them to use with a well-constructed semantic view (only the fields they really want, no cryptic names, good help text, etc).
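BI tools provide their own ways of defining semantic views, so the following is only a tool-agnostic sketch of the idea in Python: a hypothetical list of exposed fields, each mapping a cryptic physical column to a friendly label with help text, plus a helper that projects a raw warehouse row onto the view. The column names, labels and data are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ViewField:
    column: str     # physical (often cryptic) column name in the warehouse
    label: str      # business-friendly name that users see
    help_text: str  # short description shown as help text


# Hypothetical view for campaign planning: only the fields creatives need.
CAMPAIGN_VIEW = [
    ViewField("prod_cat_cd", "Product Category", "Top-level product category."),
    ViewField("cust_seg", "Customer Segment", "Marketing segment the customer belongs to."),
    ViewField("net_rev_gbp", "Net Revenue (GBP)",
              "Revenue after discounts and returns, in pounds sterling."),
]


def apply_view(row: dict, view: list[ViewField]) -> dict:
    """Project a raw warehouse row onto the view, renaming columns to labels.

    Any column not listed in the view (internal IDs, personal data, etc.) is dropped.
    """
    return {f.label: row[f.column] for f in view}


def help_for(label: str, view: list[ViewField]) -> str:
    """Look up the help text for a field by its user-facing label."""
    return next(f.help_text for f in view if f.label == label)


raw_row = {
    "prod_cat_cd": "GARDEN",
    "cust_seg": "Young Families",
    "net_rev_gbp": 1250.0,
    "cust_email": "someone@example.com",  # present in the table, but not exposed
}
print(apply_view(raw_row, CAMPAIGN_VIEW))
# {'Product Category': 'GARDEN', 'Customer Segment': 'Young Families', 'Net Revenue (GBP)': 1250.0}
print(help_for("Net Revenue (GBP)", CAMPAIGN_VIEW))
```

Keeping the list of exposed fields short is the point: anything not in the view simply never reaches the creatives, which also keeps them away from data they are not allowed to use.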

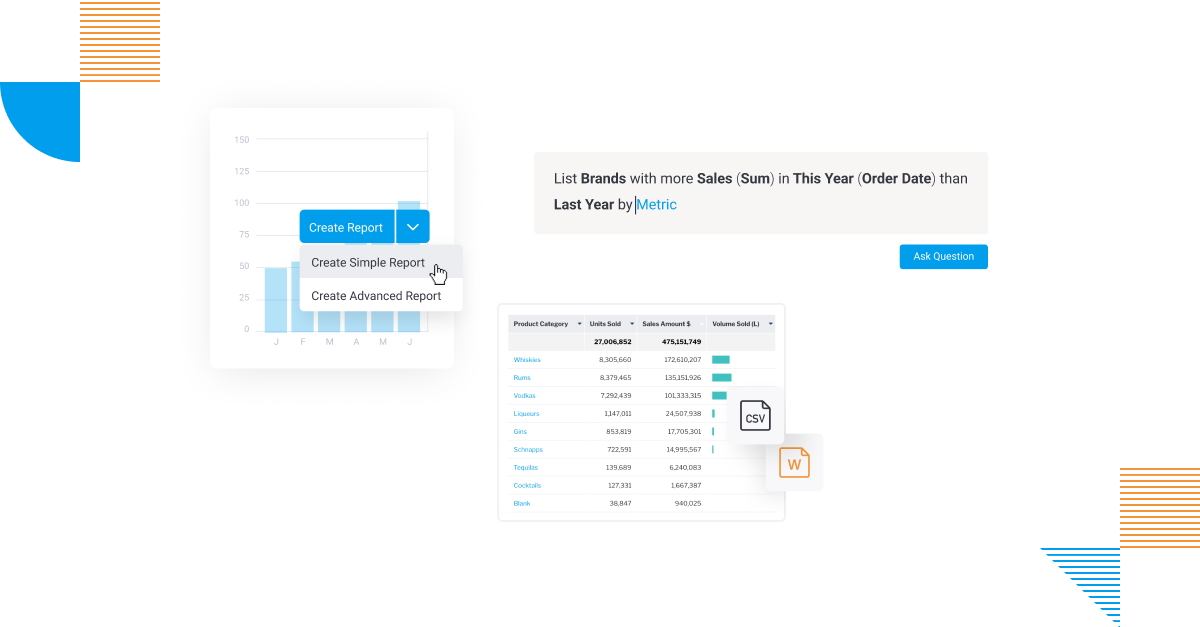

If you’re using Yellowfin, you can go one better with a feature called Guided NLQ, which helps users construct the most common query types using a simple point-and-click interface and then displays the results in an appropriate chart. The chart can then be edited further using the full functionality of the report builder if required, but the default chart is often good enough.

Conclusion

Successful “self-service BI” is not about getting rid of your BI and Insight Teams. It’s about ensuring that they’re focused on the most value-adding tasks. For the BI Team, that means creating reusable assets which really support business decision-making. For the Insight Team, it’s about using data to help you solve your hardest business problems.

From a BI tools perspective, it’s about embedded BI and data-centric collaboration.

About the Author

Rob is a seasoned IT professional with more than 30 years’ experience of working in a variety of Development and Architecture roles.

Rob has worked with a wide range of data management technologies and tools including databases, integration tools, data modelling and data governance tools and a host of adjacent technologies including Identity and Access Management, Process Modelling, Cloud Platforms, Networking, Enterprise Architecture and many more.

Prior to joining Yellowfin, Rob was Solution Architect on a major project to develop a new Data Platform for the John Lewis Partnership (a major UK retail organisation), hosted on Google Cloud Platform and including Google Cloud Storage, BigQuery, Snowflake and Looker as well as Collibra, all integrated with PingFederate SSO.
