Best Practice Guide

The ultimate step-by-step guide for analytic content creators.

Data Optimization

-

One of the most common causes of slow performance is not having an environment that is optimized for analytical workloads. This includes the infrastructure upon which you deploy, as well as the databases you are using to report from.

There are four key areas of data performance that fall under data optimization:

- Hardware

- Databases

- Data model

- Data volume

-

Hardware

Updated 6 September 2024Ensure that the hardware you have deployed is appropriate for your use case and concurrent user volumes. This information should be readily available from your database provider. Yellowfin minimum server specifications are located here.

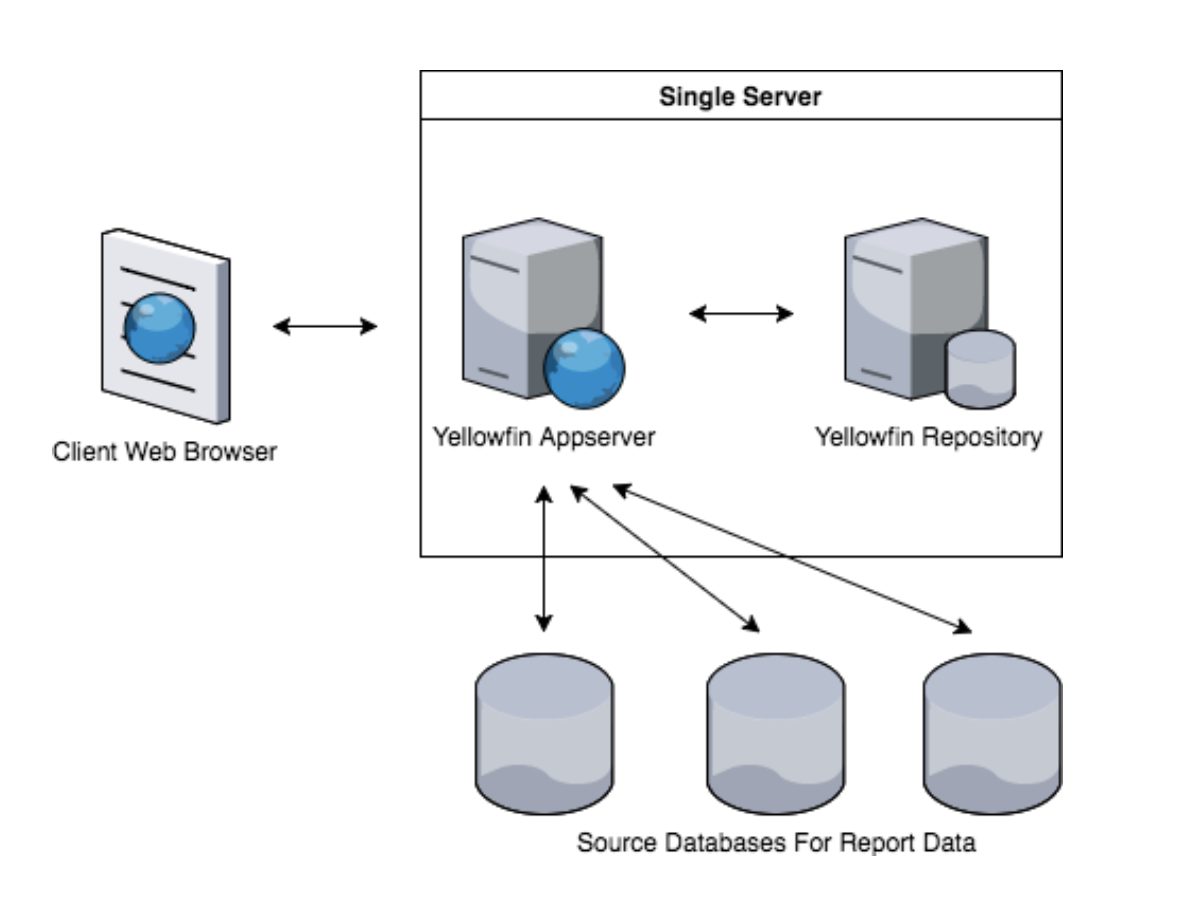

It is recommended you separate servers for your BI and analytics front-end from your database layer.

-

Databases

Ensure the database you select for storing your analytical data is fit for purpose. Databases such as MySQL or SQLServer are more appropriate for transactional database use cases than for analytical ones, as an example.

If you need to analyze very large amounts of data at a granular level, then you should ensure you use a database designed for that specific workload – Exasol, Redshift, Snowflake, etc.

-

Data Model

Check the data model – the table structure in which your data is stored – is optimized for the queries you wish to run. Often databases have been designed to optimize transactional workloads, which means they are great for running an app, but poor for running reports. Simply connecting to your transactional app to run reports, directly from the source, may not be ideal.

You should consider extracting your data from a transactional database and moving it into a database with data structures designed for querying.

Also consider table structure, join complexity and primary/foreign keys. If your data model is overly complicated, and or not indexed appropriately, the workload of the database will increase.

-

Data Volume

Increase performance by limiting the amount of data that is available for querying. There are two primary methods of doing this:

- Limit by date – remove all records that are more than n years old

- Use Data Aggregation – aggregate data into daily or weekly summaries

Deciding what data to limit is driven by the analytical needs of the business. When planning dashboards and reports, include reference to the period for which you need to enable analysis.

Generally, as a rule of thumb, a minimum of 13 months’ data is required to provide a user with the ability to understand trends in their data. Ideally for trend analysis, the ability to go back 3 years would be preferable. If the data is only to be used for day-to-day operational decision-making, the timeframe would much be shorter.

Limiting date is simple and retains a high level of granularity, but limits the span of trends that can be analyzed. Aggregating data on the other hand, reduces the level of granularity, but enables you to keep a longer span of dates.